TikTok For AI Research

New multimodal embedding models, TikTok for keeping up with AI research, and Claude gets a 1 million context window upgrade

tl;dr

- New multimodal embedding model from Google

- TikTok for keeping up with AI research

- Claude gets a 1 million context window

Releases

Multimodal embedding models

Embedding models allow us to compare the “vibe” of two pieces of content, which is useful in contexts where you don’t know the specific keywords you are looking for when searching for a document.

Historically, good embedding models only acted upon one modality, like text or images, and did not interoperate between them. A multimodal embedding model could, in theory, let you upload an image of a flower and find, from your database, a poem about flowers, a video explaining how to take care of flowers, or a podcast about the best-smelling flowers.
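To make the "vibe" comparison a bit more concrete, here is a minimal sketch of how a search over embeddings usually works: every item becomes a vector, and we rank items by cosine similarity to the query vector. The three-number vectors and item names below are made up by us for illustration; a real model would produce vectors with hundreds or thousands of dimensions.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical, hand-written embeddings. In a multimodal model, text, video
# and images would all be mapped into this same vector space.
database = {
    "poem about flowers (text)": [0.9, 0.1, 0.0],
    "flower care tutorial (video)": [0.8, 0.3, 0.1],
    "quarterly tax guide (text)": [0.0, 0.2, 0.9],
}

# Hypothetical embedding of an uploaded image of a flower.
query = [1.0, 0.2, 0.0]

def search(query_vector, items, top_k=2):
    """Return the names of the top_k items most similar to the query."""
    scored = [(cosine_similarity(query_vector, vec), name)
              for name, vec in items.items()]
    scored.sort(reverse=True)
    return [name for _, name in scored[:top_k]]

results = search(query, database)
print(results)
```

The flower image never shares a keyword with the poem or the video; they end up close together only because their vectors point in a similar direction.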

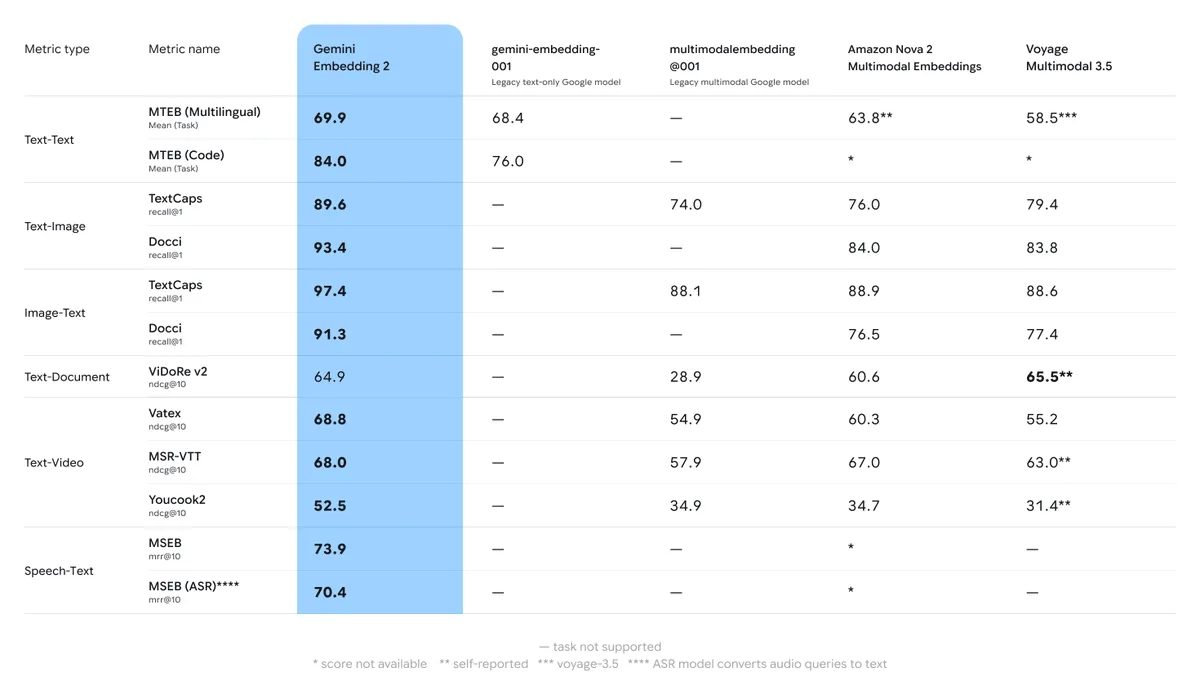

Gemini Embedding 2

Google started the week by releasing its Gemini Embedding 2 model.

Although it is mainly compared against other multimodal embedding models, it also does extremely well against the domain-specific ones, placing in the top 5 when compared against the current best text-only open source models.

As mentioned above, there are very few multimodal embedding models to compare against, so it easily takes the top spot in all the other categories.

MixedBread WholeEmbed V3

Within two days Google had some competition, with search startup MixedBread AI (great company name) releasing the WholeEmbed V3 model.

It seems to be better than Google’s model for many use cases, but the two are not the same kind of product.

Google’s endpoint is a traditional one: you give it your content and it returns a vector (an array of numbers like [1.12, 2.97, -3.2, 0.49]) which you can then use with any vector database that you like.

MixedBread, on the other hand, does not give you back a vector. They maintain the search engine themselves, so you just upload your data into it and then query it. This is nice if you want a fully managed solution, but it lacks the flexibility that Google gives you. They are also (probably) using a late interaction embedding model, which would likely mean higher search latencies as well.
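For a rough idea of why late interaction can cost more at query time, here is a small sketch of the general technique (ColBERT-style MaxSim scoring). This is not MixedBread's implementation, which we have not seen; the function names and toy vectors are our own. Instead of one vector per document, each document keeps one vector per token, and every query token is compared against every document token.

```python
def dot(a, b):
    """Plain dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))

def single_vector_score(query_vec, doc_vec):
    """Classic embedding search: one dot product per document."""
    return dot(query_vec, doc_vec)

def late_interaction_score(query_token_vecs, doc_token_vecs):
    """MaxSim: for each query token, take its best-matching document token,
    then sum those best matches. Costs len(query) * len(doc) dot products."""
    return sum(
        max(dot(q, d) for d in doc_token_vecs)
        for q in query_token_vecs
    )

# Toy, hand-written token vectors (assumptions, not real model output).
query_tokens = [[1.0, 0.0], [0.0, 1.0]]
doc_a = [[0.9, 0.1], [0.1, 0.9], [0.5, 0.5]]  # covers both query tokens
doc_b = [[0.9, 0.1], [0.8, 0.2]]              # covers only the first one

score_a = late_interaction_score(query_tokens, doc_a)
score_b = late_interaction_score(query_tokens, doc_b)
print(score_a > score_b)
```

The extra token-level comparisons are what tend to improve relevance, and they are also where the extra latency and storage come from.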

Quick Hits

Research paper TikTok

Hundreds of AI research papers get released every week, so keeping up with all of them is a full-time job.

To help with this, alphaXiv has released a TikTok-style app called Briefs that shows you relevant papers with summaries in two lengths (one paragraph and one page).

Claude 1 million context

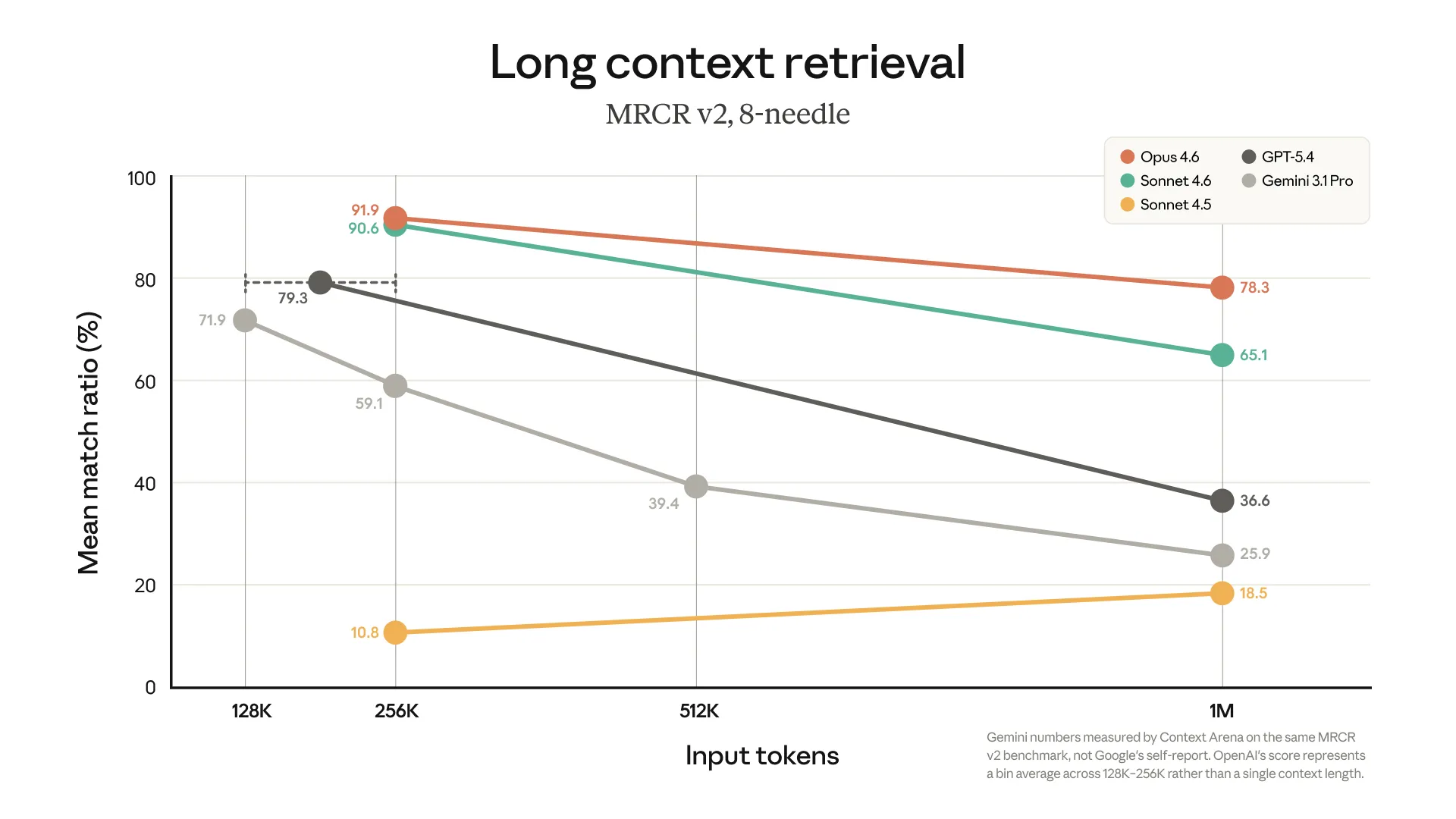

Anthropic has offered a 1 million token context window in beta for the last few months.

This week they decided they were happy with what they were seeing and moved it out of beta, making it generally available.

Based on their benchmarks (needle-in-a-haystack style retrieval), Claude outclasses both Gemini and GPT at long context tasks.

They have also steered away from increasing prices for long contexts; OpenAI and Google both charge double for tokens over 256K, but Anthropic have either found a way to keep prices down or are just willing to eat the extra cost to show up the other labs. Either way, consumers win.

Finish

I hope you enjoyed the news this week. If you want to get the news every week, be sure to join our mailing list below.

Stay Updated

Subscribe to get the latest AI news in your inbox every week!