
We can measure slop

Measuring the effects of AI on a codebase over time, and a GitHub PSA

Research

Slop Code Bench

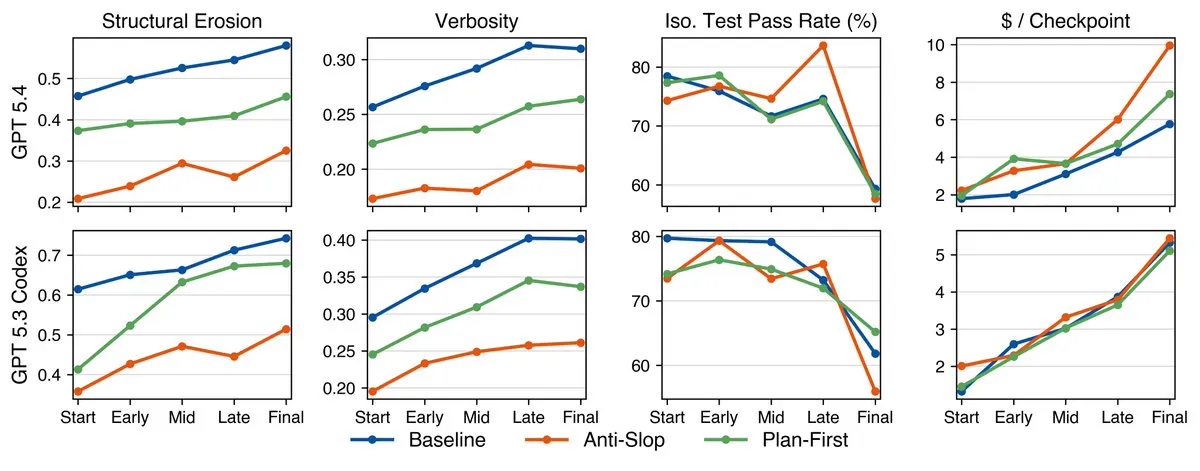

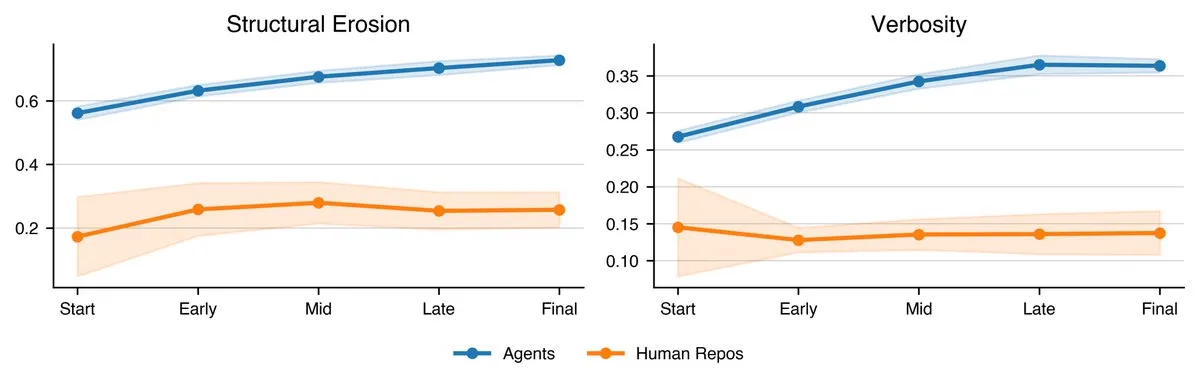

I have been looking for a good benchmark that shows what happens to codebases built with vibe coding, and I have finally found one: Slop Code Bench.

Across 20 codebases, each extended through up to 8 sequential tasks, the authors find that results get worse on every metric they measured: code structure, verbosity, test pass rate, and cost.

They also tested models in their “natural habitats”, i.e. Claude Code or the Codex CLI, and tried different prompting techniques, including instructions on how to avoid sloppy code and a planning phase at the start. These techniques improved the code at the start, but they did not stop quality from declining as the AI added more and more features.
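To make the idea of "measuring decline over sequential tasks" concrete, here is a minimal sketch. It is not the benchmark's actual method or code: the `Checkpoint` fields, the choice of lines of code as a verbosity proxy, and the least-squares slope as a trend measure are all my own assumptions for illustration.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Checkpoint:
    """Snapshot of one codebase after a task (all fields are hypothetical)."""
    task_index: int     # 1-based position in the task sequence
    tests_passed: int
    tests_total: int
    lines_of_code: int  # crude stand-in for verbosity


def pass_rate(cp: Checkpoint) -> float:
    """Fraction of tests passing at this checkpoint (0.0 if there are no tests)."""
    return cp.tests_passed / cp.tests_total if cp.tests_total else 0.0


def slope(xs, ys) -> float:
    """Least-squares slope of ys against xs."""
    x_bar, y_bar = mean(xs), mean(ys)
    denom = sum((x - x_bar) ** 2 for x in xs)
    if denom == 0:
        raise ValueError("need at least two distinct x values")
    return sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys)) / denom


def trend(checkpoints, metric) -> float:
    """Change in `metric` per additional task, fitted over all checkpoints."""
    ordered = sorted(checkpoints, key=lambda cp: cp.task_index)
    return slope([cp.task_index for cp in ordered],
                 [metric(cp) for cp in ordered])


# Made-up numbers purely to exercise the functions; not benchmark results.
history = [
    Checkpoint(task_index=1, tests_passed=10, tests_total=10, lines_of_code=200),
    Checkpoint(task_index=2, tests_passed=18, tests_total=20, lines_of_code=450),
    Checkpoint(task_index=3, tests_passed=24, tests_total=30, lines_of_code=800),
]

pass_rate_trend = trend(history, pass_rate)
loc_trend = trend(history, lambda cp: cp.lines_of_code)
```

A negative `pass_rate_trend` alongside a positive `loc_trend` is the pattern the benchmark describes: the code grows while fewer of its tests pass.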

I hope to see this benchmark expand and be updated with new models. If it is, you will see me reference it when new models are released.

Quick Hits

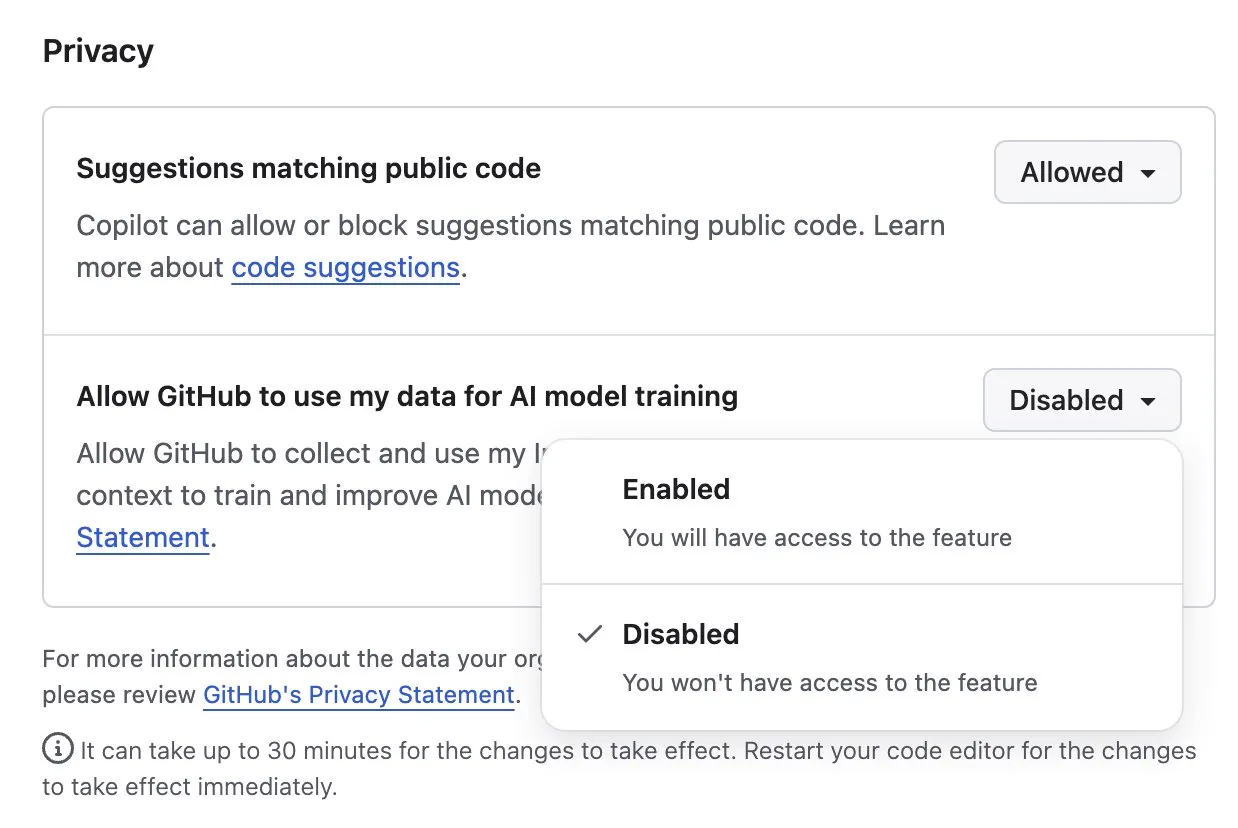

GitHub trains on your data

A PSA for those who use GitHub: by default, GitHub uses your repo data to train its models.

To turn it off, go to Settings > Copilot > Features > Privacy and set “Allow GitHub to use my data for AI model training” to disabled.

Finish

It was a quiet week for AI news. I hope you enjoyed this issue and can spend the extra time catching up on previous releases. If you want to get the news every week, be sure to join our mailing list below.

Stay Updated

Subscribe to get the latest AI news in your inbox every week!