
Is Opus the GPT 5.1 Killer?

Claude Opus 4.5 vibe check, Flux2 and Qwen image releases, and how good are LLMs at astrophysics

Correction: I had erroneously said that the Claude Code $20 plan had access to Opus 4.5. It does not. On the $20 plan, Opus 4.5 usage is billed through the API instead of being covered by your Claude Code subscription.

tl;dr

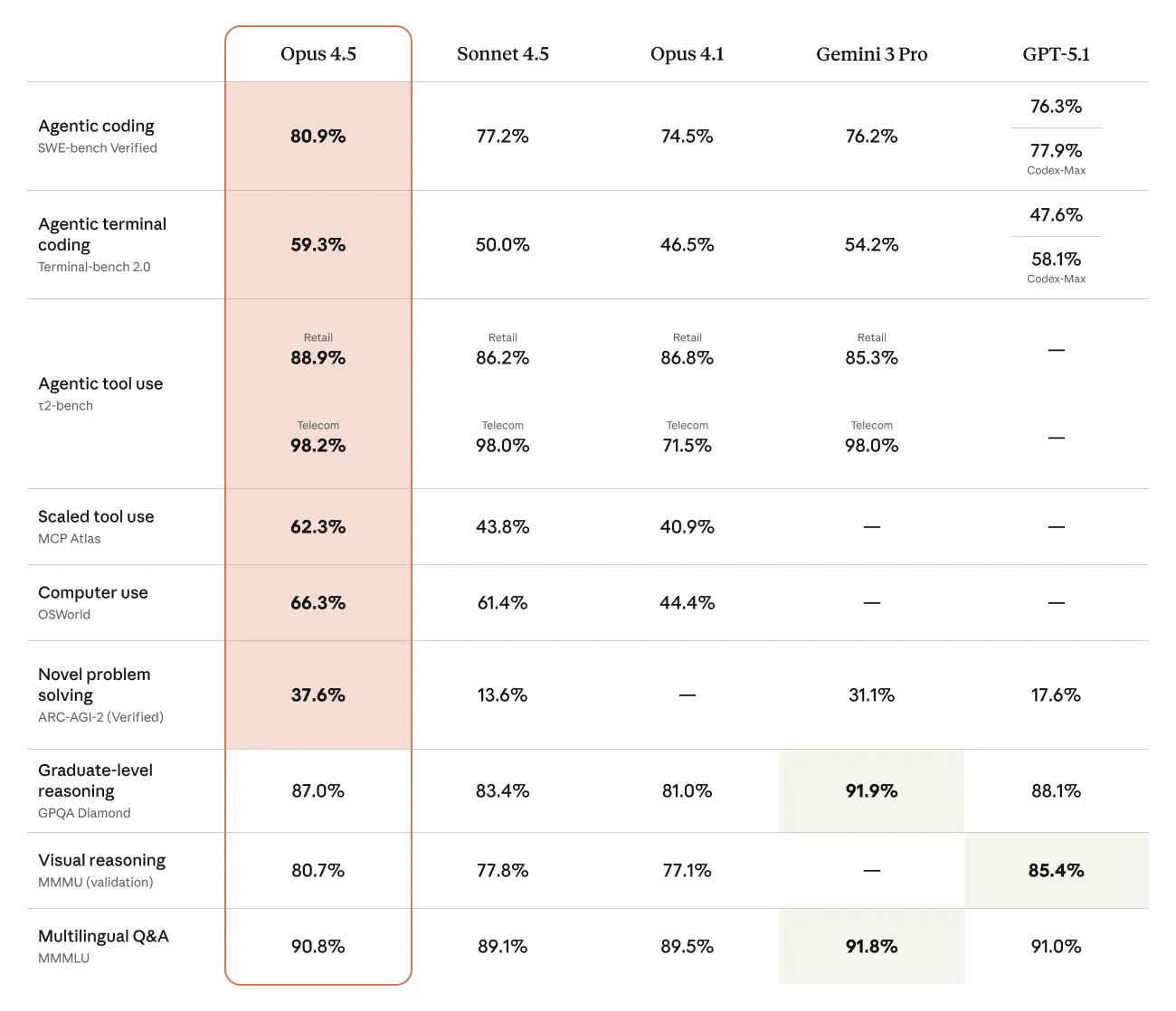

- Opus 4.5 has arrived. Can it beat GPT 5.1?

- Flux and Qwen both release new image generation models

- How good are LLMs at astrophysics?

Releases

Claude Opus 4.5

The largest model in the Claude family has gotten its long-awaited refresh, with Opus 4.5 being released this week.

Before we get into model quality, we need to talk about the more interesting part of this update, which is its pricing.

Previous Opus models were arguably the best models of their time, but their extremely high price made them hard to justify using. This changes with the Opus 4.5 release, as Anthropic has cut the price of Opus to a third of what it was previously.

| Model | Input ($ per million tokens) | Output ($ per million tokens) | Tokens per second |
|---|---|---|---|
| Claude Sonnet 4.5 | $3 | $15 | 57 |
| GPT 5.1 | $1.50 | $10 | 34 |
| Gemini 3 Pro Preview | $2 | $12 | 80 |
| Claude Opus 4.1 | $15 | $75 | 29 |
| Claude Opus 4.5 | $5 | $25 | 64 |

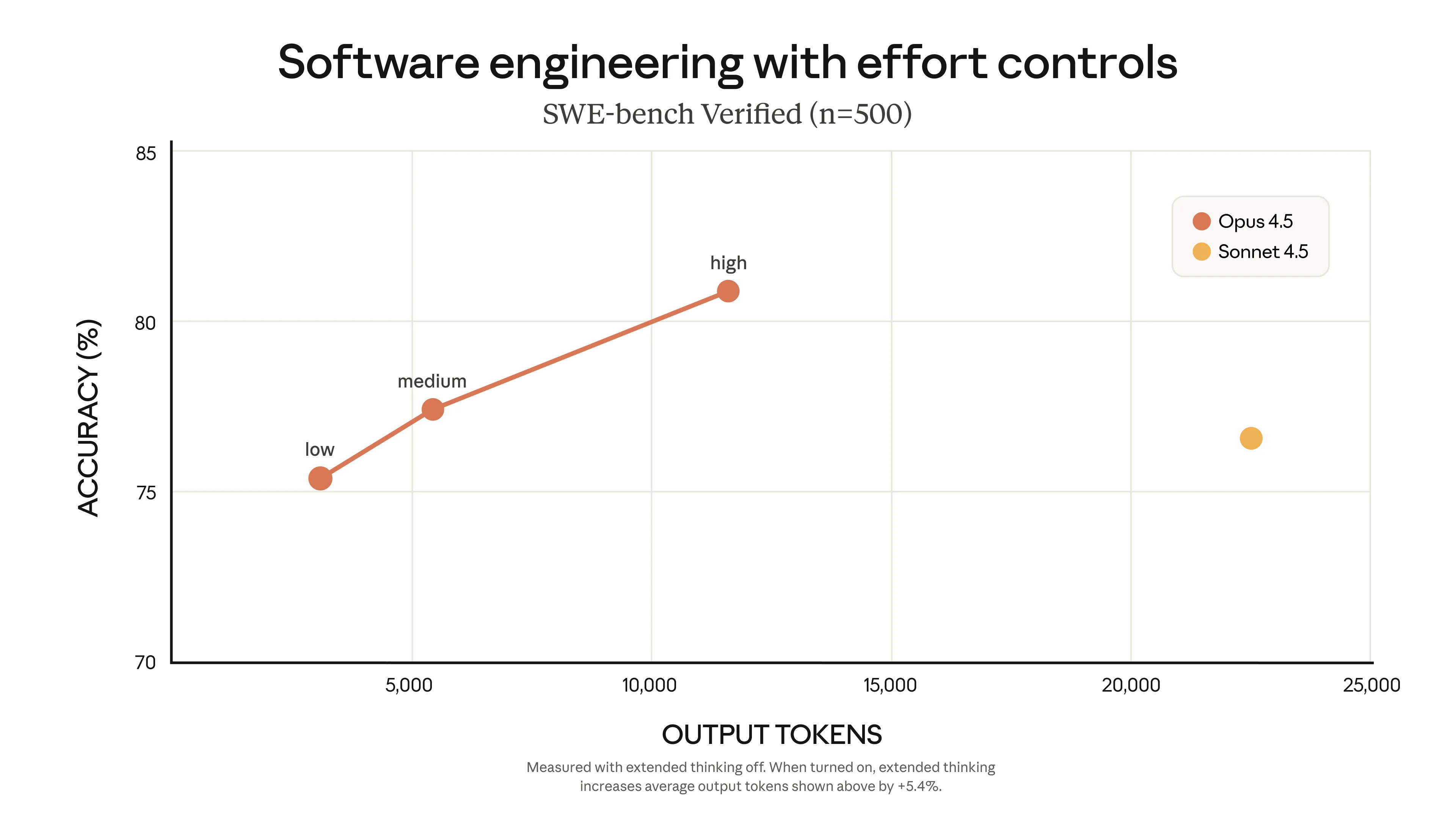

Along with this decrease in cost, the model also uses far fewer tokens than Sonnet to reach the same solution, making it potentially cheaper than Sonnet for medium and high difficulty tasks.
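To make the "potentially cheaper" claim concrete, here is a rough sketch of the arithmetic using the Sonnet 4.5 and Opus 4.5 prices from the table above. The token counts are made-up numbers for illustration, not measurements, and `task_cost` is a hypothetical helper. Since Opus 4.5 costs 5/3 as much as Sonnet 4.5 on both input and output, it comes out ahead whenever it uses fewer than 60% of the tokens Sonnet would.

```python
# Prices from the table above, in USD per million tokens.
PRICES = {
    "Claude Sonnet 4.5": {"input": 3.00, "output": 15.00},
    "Claude Opus 4.5": {"input": 5.00, "output": 25.00},
}


def task_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost of one task for the given token counts."""
    price = PRICES[model]
    total = input_tokens * price["input"] + output_tokens * price["output"]
    return total / 1_000_000


# Hypothetical token counts for the same task (assumed, not measured):
# here Opus is imagined to need half the tokens Sonnet does.
sonnet_cost = task_cost("Claude Sonnet 4.5", input_tokens=200_000, output_tokens=60_000)
opus_cost = task_cost("Claude Opus 4.5", input_tokens=100_000, output_tokens=30_000)

# Opus is cheaper once its token usage drops below this fraction of Sonnet's.
break_even = PRICES["Claude Sonnet 4.5"]["output"] / PRICES["Claude Opus 4.5"]["output"]

print(f"Sonnet 4.5: ${sonnet_cost:.2f}")
print(f"Opus 4.5:   ${opus_cost:.2f}")
print(f"Break-even token ratio: {break_even:.0%}")
```

Whether Opus actually hits that ratio depends on the task, so it is worth checking token usage on your own workloads before switching.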

Now let’s finally talk about quality. For coding, it is the first model I have seen that can compete with the raw intelligence of GPT 5.1. For general coding tasks, it matches or exceeds the ability of GPT 5.1, and it has excellent instruction-following capabilities. It still has the usual overeagerness to make additional changes, but this is easily mitigated by adding “Only make changes that are directly requested. Keep solutions simple and focused” to your prompt or CLAUDE.md instructions.

Its frontend ability out of the box is below Gemini 3, but with the frontend skill from Anthropic, it is able to match Gemini’s design capabilities.

Also, being a Claude model, it has an interesting personality and unique writing style, which, coupled with the intelligence of Opus 4.5, makes for a great model to chat with. Anthropic has also been working on reducing the model’s sycophancy, with Opus 4.5 having a 60% reduction when compared with Sonnet 3.5.

Also note that since Opus has better instruction following capabilities, you may need to update your prompts for it. To make this easy, Anthropic has made a guide and also a plugin for Claude Code to migrate your rules for you.

Image model wars

Flux.2

Black Forest Labs has returned after a long (for the AI world) break to release their Flux.2 series of models.

The Flux.1 series of models was strong for its time, and while the closed source models had moderate success, the open source options of Flux Dev and Schnell became the go-to models for the open source community.

The Flux.2 models come in 4 variants: Pro, Flex, Dev, and Klein.

The Pro model is their flagship model with the highest quality at a low cost. The Flex model allows you to take more control over model parameters, such as steps and guidance scale, while coming at a higher cost for this flexibility. Dev continues to be the distilled open source model, and Klein (which has not been released yet) is meant to be a smaller and faster version of Dev.

In terms of capabilities, the Flux.2 models all see a big jump from their previous version. They have better image detail and photorealism, text rendering, prompt following, and world knowledge, and can generate images up to 4MP (previously they had only been able to do around 1-2MP).

The Flux.2 models also all support image editing out of the box, allowing up to 10 images to be used as references.

For the Dev model, since it is open source, we know that the model sizes have increased, as Flux.2 Dev now has a 24 billion parameter text encoder (Mistral Small) and an 8 billion parameter diffusion model.

We will get into the comparisons with other models below, but before then, there is another new image generation model we need to introduce.

Z Image

It has been a while since we have covered something from Alibaba, but this week they have released a new open source image generation and editing model called Z Image (not to be confused with Z.ai, the makers of the GLM series of LLMs).

Z Image is meant to be a small, fast, well-polished model for hyper-realistic image and text rendering. It has 6 billion parameters and uses Qwen3 4B as both a text encoder and a prompt expander.

It comes in 3 variants: Turbo, which is the fully post-trained model; Edit, which is an editing version; and Base, which is the pretrained base model to use for finetuning. Right now only the Turbo model has been released, with the other two expected in the coming weeks.

If you want to see more examples of Z Image, check out their gallery.

Comparisons against Nano Banana Pro

The usual sites that I use for head-to-head comparisons of models, LMArena and Artificial Analysis, have not released scores for either model yet, so I will give a rough vibe check based on what I have seen so far.

Fal has released a video with a side-by-side comparison of the models, which is a good starting point for understanding the differences between them.

Both models are very strong and can compete with Nano Banana Pro in terms of quality, but they fall short when you start getting picky about the details. Also, when it comes to rendering large amounts of text or making infographics, neither comes close to Nano Banana Pro.

Flux’s strong suit seems to be more artistic and stylized prompts, while Z Image is good at realism and text rendering, and is just okay at everything else.

| Model | $ per MP | Image Generation Time |
|---|---|---|
| Nano Banana Pro | $0.035 | 20 seconds |
| Flux.2 Pro | $0.012 | 10 seconds |
| Z Image | $0.005 | 1 second |
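As a back-of-the-envelope comparison, the numbers in the table can be turned into a batch estimate. This sketch assumes that cost scales linearly with megapixels and that the listed generation time applies per image, regardless of resolution and with no parallelism. Both are simplifying assumptions on my part, and `batch_estimate` is a hypothetical helper.

```python
# (price in USD per megapixel, seconds per image), taken from the table above.
MODELS = {
    "Nano Banana Pro": (0.035, 20),
    "Flux.2 Pro": (0.012, 10),
    "Z Image": (0.005, 1),
}


def batch_estimate(model: str, images: int, megapixels: float) -> tuple[float, float]:
    """Return (total cost in USD, total minutes) for a batch of images.

    Assumes linear per-megapixel pricing and sequential generation.
    """
    price_per_mp, seconds_per_image = MODELS[model]
    cost = price_per_mp * megapixels * images
    minutes = seconds_per_image * images / 60
    return cost, minutes


for name in MODELS:
    cost, minutes = batch_estimate(name, images=1_000, megapixels=1.0)
    print(f"{name}: ${cost:.2f}, about {minutes:.0f} min")
```

Under those assumptions, 1,000 one-megapixel images come to roughly $35, $12, and $5 respectively, which is where the gap between the models starts to matter.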

Z Image makes sense as the best model to run locally, due to its small size and resulting speed, even on lower end hardware. It will also be interesting to see what the community can do with finetuning to make the model good at images other than hyper-realistic ones. It is also what I would reach for if I needed to make realistic images quickly and cheaply.

Flux is a Swiss Army Knife model, with good aesthetics and prompt following. As with Z Image, I think the community will be able to do a lot with this model through finetuning, so I expect it to only get better over time.

Nano Banana Pro is for the most complex prompts, prompts that require reasoning or tool use, and also for making diagrams, slides, or any other text heavy content.

Quick Hits

Anthropic API Features

Along with the Opus 4.5 release, Anthropic also released some features for their API that enhance the model’s ability to use tools.

These features are:

- Tool Search Tool

- Programmatic Tool Calling

- Tool Use Examples

You can read more about what these are and how they help in this Tweet or on their blog. These features are only for the API right now, but will most likely also be available in Claude Code in the near future.

Can LLMs reproduce Astrophysics Papers?

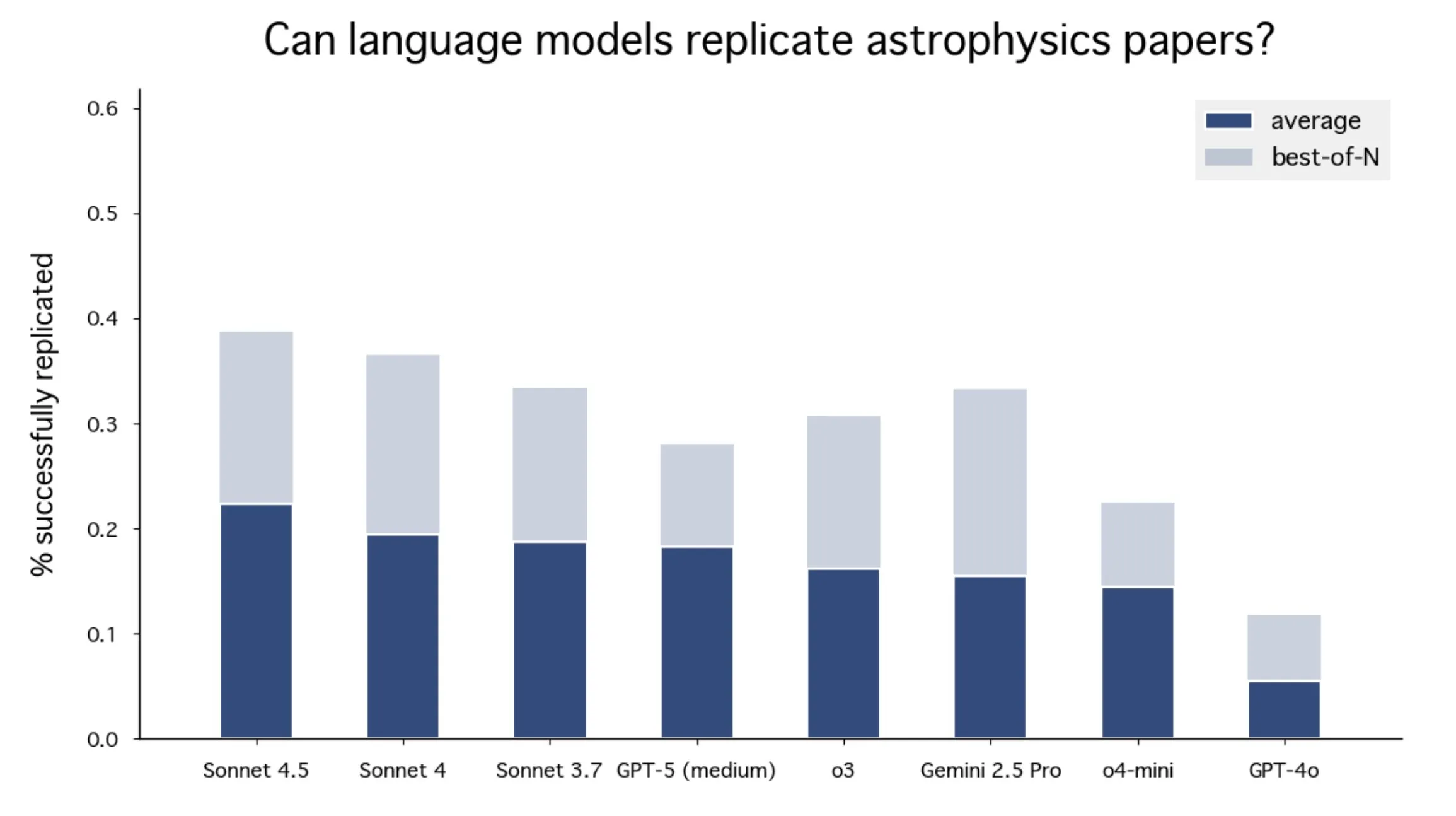

Astrophysicists from Stanford wanted to know how good LLMs are at reproducing astrophysics papers, since these papers tend to be very data analysis heavy and require little to no real world interaction once the data has been collected.

What they found is that LLMs struggle with this task, with the best models scoring under 20% on the benchmark.

You can read the whole setup for the evaluation and their take-aways from it in their paper.

NPM Malware

Just a PSA:

There was a supply chain attack on NPM where many popular packages had malware injected into them that would scrape and steal your API keys.

You can see if your code has been affected using this tool.

Finish

I hope you enjoyed the news this week. If you want to get the news every week, be sure to join our mailing list.