AI Espionage and GPT 5.1

Claude Code gets used for a massive hacking operation, GPT 5.1 is released, OpenAI fights the NYT over data usage, and GPU hyperscalers are in trouble.

tl;dr

- Claude Code gets used for a massive hacking operation

- GPT 5.1 is released

- OpenAI fights the NYT over data usage

- GPU hyperscalers are in trouble

News

Anthropic Stops AI Espionage

This week Anthropic announced that it had disrupted a large-scale AI cyberattack, which it describes as the first of its kind.

The attackers, whom Anthropic assesses with high confidence to be a Chinese state-sponsored group, used a jailbroken version of Claude Code to target roughly 30 entities around the world, including large tech companies, financial institutions, chemical manufacturing companies, and government agencies.

Jailbreaking an LLM means bypassing the safety measures and restrictions built into the model so that it performs actions it normally wouldn’t be allowed to. This can include accessing sensitive information, performing unauthorized actions, or ignoring ethical guidelines. It is usually done with sophisticated prompt engineering.

The attack, which occurred in mid-September, used Claude Code with custom MCP servers and tools as the orchestrator for a larger system, allowing it to carry out the reconnaissance, initial access, persistence, and data extraction phases with minimal human interaction. According to Anthropic, Claude was able to do 80-90% of the tasks fully autonomously, with humans mostly acting in a supervisory role.

Anthropic released a full thirteen-page report on the incident, which goes into detail on how Claude was used to orchestrate these attacks.

Anthropic says they will work on their classifiers behind the scenes to detect this kind of behaviour earlier on, so that future attacks can be prevented.

Even with Anthropic beefing up their protections, we will only be seeing more of these attacks in the future, as other model providers roll out models of equal or greater strength.

This, as I see it, is the main argument against open source AI. For instance, if these actors used a self-hosted version of GLM 4.6, an open source model of roughly equal quality to Sonnet 4 (the model these hackers used; Sonnet 4.5 was released in late September), then nobody would be able to “turn off” the attacks. I am still all for open source AI and think it’s a net positive, but saying it’s entirely fault free is incorrect.

Releases

GPT 5.1

OpenAI has released an update to GPT 5, GPT 5.1.

This update is not focused on raw intelligence and benchmark scaling, but rather on the intangibles and more day to day qualities of the model.

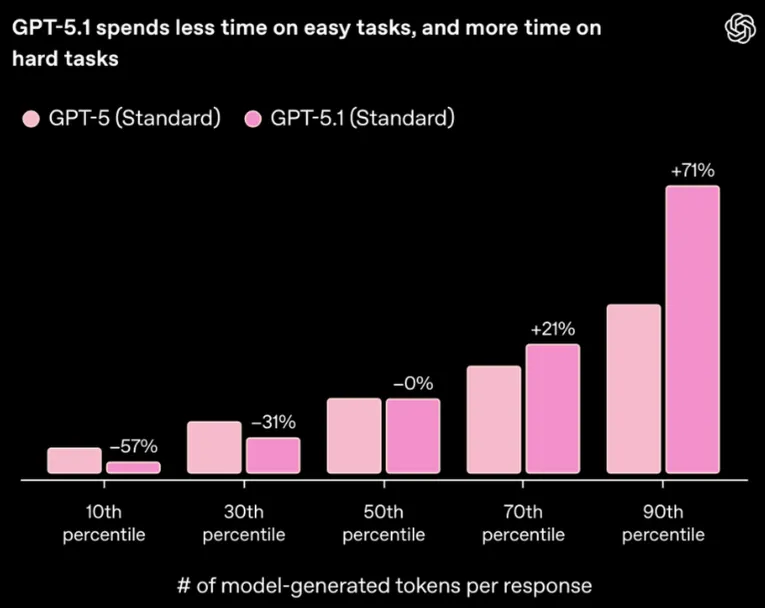

The first highlight is the dynamic reasoning capabilities, something that it inherited from the GPT-5-Codex models. This means that the model will think less for easy queries and more for complex ones.

The next has to do with the model’s personality, which is now supposedly more friendly. They have also shipped multiple new personality types that you can choose from.

Speaking of model outputs, the model should be more concise and clear in its responses, and it is more steerable with system prompts. For instance, you can tell it not to use em dashes anymore and it will actually listen. This is mostly due to improvements in the model’s instruction following.
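To make the system prompt steering concrete, here is a minimal sketch of how a style rule like the em dash one could be packaged. It only builds the role/content message list that chat-style APIs commonly accept; it does not call any API. The helper name `build_messages` and the rule wording are my own assumptions, not anything OpenAI published.

```python
def build_messages(style_rules, user_text):
    """Build a chat-style message list with style rules in the system prompt.

    Hypothetical helper for illustration only: it returns plain dicts and
    sends nothing to any model provider.
    """
    # One rule per line so each instruction is easy for the model to pick out.
    rules = "\n".join(f"- {rule}" for rule in style_rules)
    system_prompt = "Follow these style rules strictly:\n" + rules
    return [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": user_text},
    ]


messages = build_messages(
    ["Do not use em dashes.", "Keep answers under 100 words."],
    "Summarize this week's AI news.",
)
for message in messages:
    print(message["role"], "->", message["content"])
```

The resulting list is what you would pass as the messages argument of whichever chat client you use.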

They also released Mini and Codex variants of the model, which seem to have similar characteristics.

I had previously said that GPT-5 was the strongest model out there right now, and this update provides a small but meaningful bump to the model’s capabilities.

Quick Hits

OpenAI Fights for Users’ Data

OpenAI is still fighting the New York Times in court as the Times’ lawsuit over data usage continues.

This week, The Times filed a request for 20 million ChatGPT conversations, even though the request is not narrowed down in any way for relevance to the case.

OpenAI is obviously fighting this, saying “courts do not allow plaintiffs suing Google to dig through the private emails of tens of millions of Gmail users irrespective of their relevance”. Hopefully the judge sees this request as the ridiculous overstep of privacy that it is and denies it.

GPU Hyperscalers are in trouble

There has been a lot of talk recently on Twitter about GPU hyperscalers and the economics around them. Usually the commentary is from analysts and outsiders who are not directly involved in the space.

The main question is the economics. With 4-year depreciation cycles for the hardware, GPU hyperscalers’ margins are looking very thin, even with massive growth in AI now and projected for the future.
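To see why the depreciation cycle matters so much, here is a rough back-of-the-envelope sketch using straight-line depreciation. Every number in it (purchase price, utilization, rental rate, operating cost) is a hypothetical placeholder I picked for illustration, not a figure from any provider, and `gpu_hourly_margin` is my own helper name.

```python
def gpu_hourly_margin(purchase_price, lifetime_years, utilization,
                      hourly_rate, hourly_opex):
    """Return (depreciation per billable hour, margin per billable hour).

    Straight-line depreciation: the purchase price is spread evenly over
    the hours the GPU is actually rented out during its lifetime.
    """
    hours_per_year = 365 * 24
    billable_hours = lifetime_years * hours_per_year * utilization
    depreciation_per_hour = purchase_price / billable_hours
    margin_per_hour = hourly_rate - hourly_opex - depreciation_per_hour
    return depreciation_per_hour, margin_per_hour


# All inputs below are hypothetical placeholders, not real market data.
dep, margin = gpu_hourly_margin(
    purchase_price=30_000,  # assumed cost of one GPU, in dollars
    lifetime_years=4,       # the 4-year depreciation cycle discussed above
    utilization=0.7,        # assumed share of hours the GPU is rented out
    hourly_rate=2.00,       # assumed rental price per GPU-hour
    hourly_opex=0.60,       # assumed power, cooling, and staff cost per hour
)
print(f"depreciation per hour: ${dep:.2f}, margin per hour: ${margin:.2f}")
```

With these made-up inputs, depreciation eats most of the rental price, so small changes in utilization or hourly rate swing the margin heavily. Plug in your own assumptions to see how sensitive the result is.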

This week, one of the GPU providers, HotAisle, weighed in on the conversation, painting a grim picture of the economics for themselves and the rest of the industry.

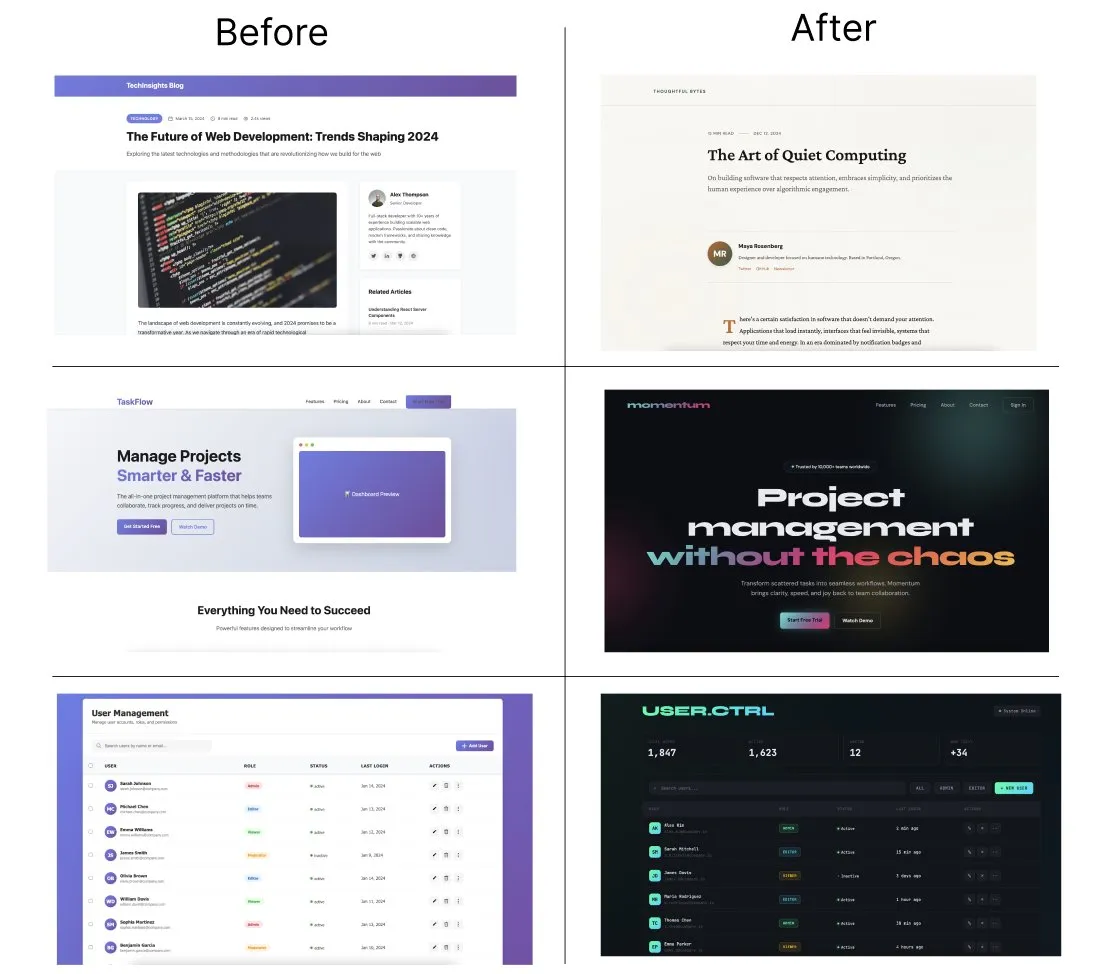

Frontend Claude Skill

Anthropic has released a Claude skill that is made to prevent your frontends from looking like the usual AI UIs that we are used to seeing (purple gradients are everywhere now). Just drop this file into your Claude Skills folder and your frontends will get a free quality boost.

Finish

I hope you enjoyed the news this week. If you want to get the news every week, be sure to join our mailing list below.
